The path of student evaluations

The term is wrapping up, and with a student evaluation on your desk, your chance to sound off about your professor is here. You have filled in the bubbles and, love or hate your instructor, you have made your opinions clear.

But where do those anonymous pieces of paper go after they leave the classroom?

The evaluation process varies across campus, but it generally starts with a call to the Scanning Services Department. Scanning, as this department within the Office of Institutional Research and Planning (OIRP) is often called, is where student evaluations are printed and later delivered to department offices near the end of term. Kathi Ketcheson, OIRP director, said Scanning is not involved in designing student evaluations; its job is to process the completed forms and produce reports of the data.

The School of Business Administration (SBA) processes student evaluations much as most PSU departments and schools do. Kathy Grove, SBA scheduling coordinator, breaks down the process:

-Evaluation forms are printed, put into envelopes by administrators in the Faculty Services office, and given to professors to be taken to class during the week before finals.

-Students are given the evaluations, and professors are not allowed in the room while students fill them out. A selected student then delivers the finished evaluations to the Faculty Services office.

-Evaluations are taken to the Scanning office to have the data processed and arranged into a report.

-The reports are sent to the Dean’s office and later assessed by department chairs.

-Faculty members see the evaluations only after grades have been released to students.

Later stages of the process often vary by department.

In the SBA’s case, area directors, who oversee certain faculty, look over the reports and then give them to the professor being evaluated.

In other parts of campus, such as the School of Fine and Performing Arts, department chairs assess the reports and use the data to gauge how well a course or professor performed each term.

Potential changes to how evaluations are processed

The Scanning office comprises just one employee, Liz Heichelbech, who is allowed to work only 20 hours a week under her contract, Ketcheson said.

“We’re trying to deal with a position that needs a higher FTE [full-time equivalency], and it’s been a struggle, no question about it,” Ketcheson said. “We’ve tried to improve the turnaround, and we depend on each department to make our job as easy as possible.”

Ketcheson said her office assistant and usually one student worker, a position that rotates each term, work with Heichelbech to speed up the process.

Grove said the SBA has offered to send an assistant from its administrative office to Scanning to help expedite the process.

Because each department uses a slightly different evaluation form, Ketcheson said, there isn’t a universal way to speed up the scanning process for all departments. In January 2007, she said, Provost Roy Koch formed the Institutional Assessment Council, a university-wide committee that is looking at ways to streamline the assessment process across campus, not just student evaluations.

No specific timeline has been set, Ketcheson said, though she hopes the committee will change the approach during the next academic year. Options could include creating a standardized evaluation for all departments or moving more evaluations online, which she said are easier for students to use and have a quicker turnaround.

How student evaluations are used across PSU

Sarah Andrews-Collier, chair of the theater arts department, said she uses the anonymous student surveys for “every class, every professor, every term.” She said anonymity benefits end-of-term evaluations because the results reflect the average student’s opinion.

“It’s a blind survey. It’s more fair and protects the students who fill them out,” Andrews-Collier said, comparing anonymous surveys with personal testimonials from students.

Andrews-Collier said data from evaluations are used whenever a faculty member comes up for a promotion, or when they are being considered for tenure.

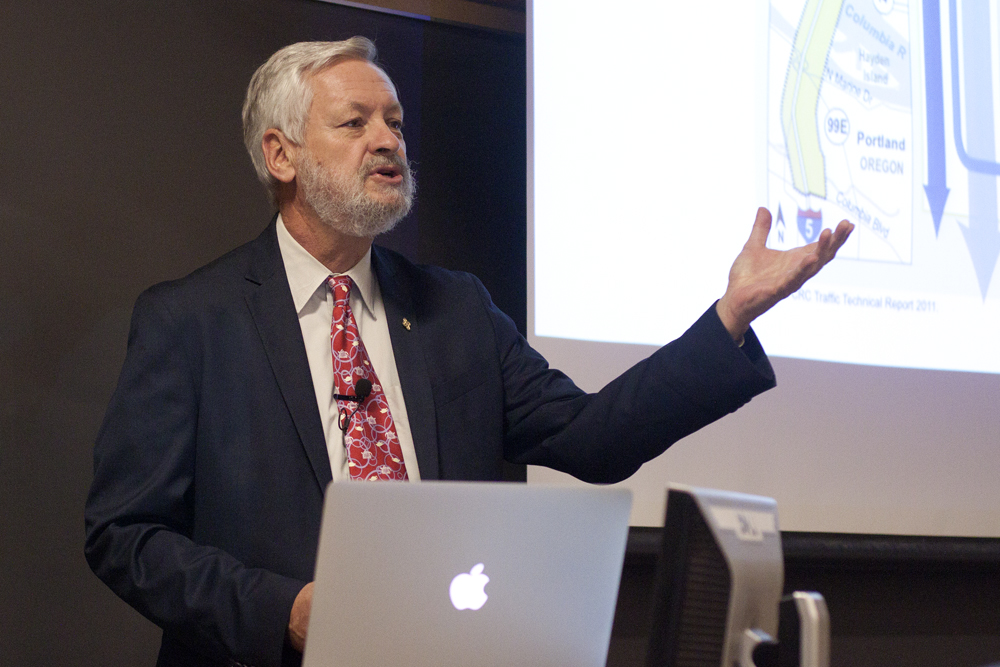

The Toulan School of Urban Studies and Planning, according to Director Ethan Seltzer, uses the evaluations to review all faculty and to help decide whether to rehire part-time or adjunct faculty members.

“We’re trying to use a single questionnaire for many courses and many professors, so it’s only a guide,” Seltzer said. “We try not to depend too much on it or to disregard it.”

Seltzer said the time between submitting evaluations to Scanning and receiving the data report is not consistent. Reports usually arrive within one term of a course’s completion, he said, though the timing depends on how many classes submit student surveys to Scanning.

A different approach

Marek Elzanowski, chair of the math and statistics department, said he prefers not to use the standard fill-in-the-bubble surveys. His department instead distributes anonymous short-answer questionnaires that are reviewed internally rather than sent to Scanning. Because the evaluations are kept in-house, the turnaround is faster, he said, with professors seeing the results within a few weeks after the end of term.

Some students choose to ignore the surveys, Elzanowski said, but those who respond give constructive comments. He said these comments are more useful than numbers when reviewing faculty performance.

“The idea is to give students a chance to do more than categorize their professors according to a finite number of choices,” Elzanowski said.